
Apr 08, 2013

Running Hadoop on Cygwin in Windows (Single-Node Cluster)

In this document you will see how to set up a pseudo-distributed, single-node Hadoop cluster (any stable 1.0.X version) backed by the Hadoop Distributed File System, running on Windows (I am using Windows Vista). Cygwin provides a Unix-style command shell on a Windows system, so you can work without having to learn a new set of commands. Cygwin is a POSIX (Portable Operating System Interface for UNIX) compatible environment. It works on both 32-bit and 64-bit systems, and its packages are selected at installation time according to your requirements.

Install Cygwin

Cygwin comes with a normal setup.exe to install in Windows, but there are a couple of steps you will need to pay attention to, so we will walk you through the installation.

To keep the installation small while saving bandwidth for you and Cygwin, the default installer will download only the files you need from the internet.

The default install path is C:\Cygwin, but if you don’t like to have programs installed on the root of your C: drive you can change the path.

Click Next until you come to the download mirror selection. Unfortunately, the installer does not say where the mirrors are located, so in most cases you might as well just guess which mirror works best.

After you have selected a mirror, the installer will download a list of available packages for you to install.

Here is where things get a bit more intimidating. There will be hundreds of packages available, separated into multiple categories. If you don’t know what a package is, you can leave the default selection and install additional packages later by running the installer again. If you know what package you need, you can search for it and the results will be automatically filtered.

Once you click Next, it will take a little while to download all the selected tools and then finish the installation.

Add Cygwin Path to Windows Environment Variable

After the installation you will have a Cygwin icon on your desktop that you can launch to open the Cygwin terminal.

This terminal starts in the C:\Cygwin\home folder, but that isn’t particularly useful because you probably don’t have any files stored there.

In this tutorial, we will take you through the step-by-step process of installing Apache Hadoop on a Linux box (Ubuntu). This is a two-part process.

There are 2 Prerequisites

Part 1) Download and Install Hadoop

Step 1) Add a Hadoop system user

When prompted, enter your password, name and other details.

NOTE: You may see the following error during this setup and installation process.

'hduser is not in the sudoers file. This incident will be reported.'

This error can be resolved by logging in as the root user and granting hduser sudo rights.
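As a rough sketch, Step 1 might look like the following on Ubuntu. The user name hduser comes from the error message above; the group name hadoop and the helper run are assumptions made for this example, since the tutorial's own commands are not shown. The script only prints the commands unless APPLY=1 is set, so it can be tried safely first.

```shell
#!/usr/bin/env bash
# Sketch of Step 1 on Ubuntu. Commands are only printed unless APPLY=1 is set.
HADOOP_USER="hduser"    # name taken from the sudoers error message above
HADOOP_GROUP="hadoop"   # assumed group name; the tutorial does not give one

# Print a command, or execute it when APPLY=1.
run() {
  if [ "${APPLY:-0}" = "1" ]; then
    "$@"
  else
    echo "+ $*"
  fi
}

# Create the group and the user (adduser prompts for password, name, etc.).
run sudo addgroup "$HADOOP_GROUP"
run sudo adduser --ingroup "$HADOOP_GROUP" "$HADOOP_USER"

# Fix for the "not in the sudoers file" error:
# as root, add the user to the sudo group.
run adduser "$HADOOP_USER" sudo
```

Run it once as is to review the printed commands, then again with APPLY=1 from an account that is allowed to create users.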

Step 2) Configure SSH

In order to manage nodes in a cluster, Hadoop requires SSH access.

First, switch to the hduser account.

Then create a new SSH key.

Enable SSH access to the local machine using this key.

Now test the SSH setup by connecting to localhost as the 'hduser' user.
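The key setup above can be sketched as follows. This is a minimal example rather than the tutorial's exact commands: it assumes OpenSSH's ssh-keygen is installed, and SSH_DIR is a variable name chosen for this example. By default it writes into a scratch directory; after switching to hduser (for example with `su - hduser`), set SSH_DIR="$HOME/.ssh" to apply it for real.

```shell
#!/usr/bin/env bash
# Scratch directory by default; use SSH_DIR="$HOME/.ssh" for a real setup.
SSH_DIR="${SSH_DIR:-$(mktemp -d)}"
mkdir -p "$SSH_DIR"
chmod 700 "$SSH_DIR"

# Create a new RSA key pair with an empty passphrase.
ssh-keygen -q -t rsa -N "" -f "$SSH_DIR/id_rsa"

# Authorize the new public key for logins to this machine.
cat "$SSH_DIR/id_rsa.pub" >> "$SSH_DIR/authorized_keys"
chmod 600 "$SSH_DIR/authorized_keys"

# With the key in ~/.ssh, test the setup with: ssh localhost
```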

Note: Please note, if you see below error in response to 'ssh localhost', then there is a possibility that SSH is not available on this system-

To resolve this -

Purge SSH using,

It is good practice to purge before the start of installation

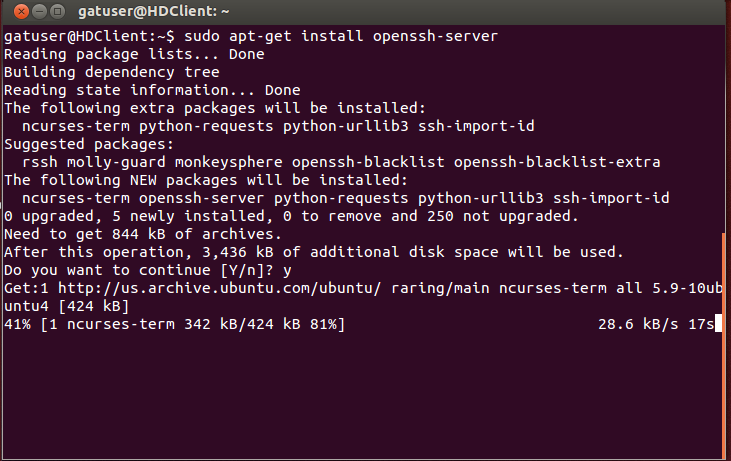

Install SSH using the command-

Step 3) Download Hadoop

Select the stable release.

Select the tar.gz file (not the file with src in its name).

Once the download is complete, navigate to the directory containing the tar file and extract it.

Now, rename hadoop-2.2.0 to hadoop.
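Unpacking and renaming can be sketched as a small helper. The function name unpack_hadoop and the archive name hadoop-2.2.0.tar.gz are assumptions for illustration; the directory name hadoop-2.2.0 comes from the step above.

```shell
#!/usr/bin/env bash
# unpack_hadoop TARBALL DEST_DIR
# Extracts the archive into DEST_DIR and renames hadoop-2.2.0 to hadoop.
unpack_hadoop() {
  local tarball="$1" dest="$2"
  tar -xzf "$tarball" -C "$dest" || return 1
  mv "$dest/hadoop-2.2.0" "$dest/hadoop"
}

# Example (run from the download directory):
#   unpack_hadoop hadoop-2.2.0.tar.gz "$PWD"
```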

Part 2) Configure Hadoop

Step 1) Modify the ~/.bashrc file

Add the Hadoop environment variables to the end of ~/.bashrc.

Now, source the file so that the new environment configuration takes effect.
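The lines to add are not shown here, so the following is only a plausible sketch. Both paths are placeholders to adjust for your machine, and BASHRC is a variable name chosen for this example; it defaults to a scratch file so the snippet can be tried without touching the real ~/.bashrc.

```shell
#!/usr/bin/env bash
# Scratch file by default; set BASHRC="$HOME/.bashrc" to apply for real.
BASHRC="${BASHRC:-$(mktemp)}"

# Append the environment settings (quoted 'EOF' keeps the $VARS literal).
cat >> "$BASHRC" <<'EOF'
# Hadoop environment (placeholder paths; adjust to your installation)
export HADOOP_HOME="$HOME/hadoop"
export JAVA_HOME="/usr/lib/jvm/default-java"
export PATH="$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin"
EOF

# Load the settings into the current shell (the "source" step above).
. "$BASHRC"
```

In an interactive terminal the last step is simply `source ~/.bashrc`.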

Step 2) Configurations related to HDFS

Set JAVA_HOME inside the file $HADOOP_HOME/etc/hadoop/hadoop-env.sh, replacing the existing value with the path to your Java installation.
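One way to make that change without opening an editor is sketched below. The helper name set_java_home and the example Java path are assumptions for illustration, and the function relies on GNU sed's -i option, as found on Ubuntu.

```shell
#!/usr/bin/env bash
# set_java_home ENV_FILE JAVA_DIR
# Rewrites the "export JAVA_HOME=" line in ENV_FILE, or appends one if absent.
set_java_home() {
  local env_file="$1" java_dir="$2"
  if grep -q '^export JAVA_HOME=' "$env_file"; then
    sed -i "s|^export JAVA_HOME=.*|export JAVA_HOME=${java_dir}|" "$env_file"
  else
    echo "export JAVA_HOME=${java_dir}" >> "$env_file"
  fi
}

# Example (the Java path is a placeholder):
#   set_java_home "$HADOOP_HOME/etc/hadoop/hadoop-env.sh" /usr/lib/jvm/default-java
```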

There are two parameters in $HADOOP_HOME/etc/hadoop/core-site.xml that need to be set:

1. 'hadoop.tmp.dir' - Specifies the directory that Hadoop uses to store its data files.

2. 'fs.default.name' - Specifies the default file system.

To set these parameters, open core-site.xml.

Place the property settings between the <configuration> and </configuration> tags.

Navigate to the directory $HADOOP_HOME/etc/hadoop.

Now, create the directory mentioned in core-site.xml.

Grant permissions on the directory.
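Taken together, the core-site.xml steps above might look like this. The two property names come from the text; the values (hdfs://localhost:9000 and the temp directory), the variable names CONF_DIR and HADOOP_TMP_DIR, and the 750 mode are assumptions for illustration. By default everything is written under a scratch directory; set CONF_DIR to $HADOOP_HOME/etc/hadoop and HADOOP_TMP_DIR to a real path to apply it.

```shell
#!/usr/bin/env bash
SCRATCH="$(mktemp -d)"
CONF_DIR="${CONF_DIR:-$SCRATCH/etc/hadoop}"
HADOOP_TMP_DIR="${HADOOP_TMP_DIR:-$SCRATCH/hadoop/tmp}"
mkdir -p "$CONF_DIR"

# Write core-site.xml with the two parameters described above.
cat > "$CONF_DIR/core-site.xml" <<EOF
<?xml version="1.0"?>
<configuration>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>${HADOOP_TMP_DIR}</value>
  </property>
  <property>
    <name>fs.default.name</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
EOF

# Create the directory named in core-site.xml and restrict access to it.
mkdir -p "$HADOOP_TMP_DIR"
chmod 750 "$HADOOP_TMP_DIR"
# On a real system the directory should belong to the Hadoop user, e.g.:
#   sudo chown -R hduser "$HADOOP_TMP_DIR"
```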

Step 3) MapReduce Configuration

Before you begin with these configurations, let's set the HADOOP_HOME path.

And Enter

Next enter

Exit the terminal and start it again.

Type echo $HADOOP_HOME to verify the path.

Now copy the files.

Open the mapred-site.xml file.

Add the settings between the <configuration> and </configuration> tags.
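The tutorial's own mapred-site.xml settings are not shown here. As a stand-in, the sketch below sets mapreduce.framework.name to yarn, a common choice for Hadoop 2.x single-node setups that run ResourceManager and NodeManager; treat it as an assumption rather than the author's configuration. In Hadoop 2.x releases this file typically starts out as a copy of mapred-site.xml.template. CONF_DIR is a name chosen for this example and defaults to a scratch directory.

```shell
#!/usr/bin/env bash
# Scratch directory by default; use CONF_DIR="$HADOOP_HOME/etc/hadoop" for real.
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# Write a minimal mapred-site.xml that runs MapReduce jobs on YARN.
cat > "$CONF_DIR/mapred-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>
EOF
```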

Open $HADOOP_HOME/etc/hadoop/hdfs-site.xml.

Add the settings between the <configuration> and </configuration> tags.

Create the directory specified in the above setting.
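A possible hdfs-site.xml for a single node is sketched below. dfs.replication and dfs.datanode.data.dir are real HDFS properties, but the values, and the names CONF_DIR and HDFS_DATA_DIR, are assumptions, since the original settings are not shown. A replication factor of 1 is used because a single node has nowhere else to place copies.

```shell
#!/usr/bin/env bash
SCRATCH="$(mktemp -d)"
CONF_DIR="${CONF_DIR:-$SCRATCH/etc/hadoop}"
HDFS_DATA_DIR="${HDFS_DATA_DIR:-$SCRATCH/hdfs}"
mkdir -p "$CONF_DIR"

# Write hdfs-site.xml: one replica per block, data stored in HDFS_DATA_DIR.
cat > "$CONF_DIR/hdfs-site.xml" <<EOF
<?xml version="1.0"?>
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>${HDFS_DATA_DIR}</value>
  </property>
</configuration>
EOF

# Create the data directory named in the setting above.
mkdir -p "$HDFS_DATA_DIR"
chmod 750 "$HDFS_DATA_DIR"
```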

Step 4) Before starting Hadoop for the first time, format HDFS

Step 5) Start the Hadoop single-node cluster
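Steps 4 and 5 can be wrapped in two small functions, sketched here under the assumption that HADOOP_HOME points at a Hadoop 2.x installation with its usual bin/ and sbin/ scripts. The function names are chosen for this example.

```shell
#!/usr/bin/env bash
# Format the NameNode. Do this only once, before the first start;
# formatting again erases the existing HDFS metadata.
format_hdfs() {
  "$HADOOP_HOME/bin/hdfs" namenode -format
}

# Start the HDFS daemons, then the YARN daemons.
start_cluster() {
  "$HADOOP_HOME/sbin/start-dfs.sh" && "$HADOOP_HOME/sbin/start-yarn.sh"
}

# Typical first run:
#   format_hdfs && start_cluster
```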


Using the 'jps' tool/command, verify whether all the Hadoop-related processes are running.

If Hadoop has started successfully, the output of jps should show NameNode, NodeManager, ResourceManager, SecondaryNameNode and DataNode.
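The check described above can be automated with a small filter. This is a sketch: the function name missing_daemons is made up for this example, and it assumes jps prints one "<pid> <name>" pair per line.

```shell
#!/usr/bin/env bash
# Daemons the tutorial expects to see in the jps listing.
EXPECTED_DAEMONS="NameNode DataNode SecondaryNameNode ResourceManager NodeManager"

# Reads jps output on stdin and prints every expected daemon that is absent.
missing_daemons() {
  local running name
  running="$(awk '{print $2}')"
  for name in $EXPECTED_DAEMONS; do
    # -x matches whole lines, so NameNode does not match SecondaryNameNode.
    printf '%s\n' "$running" | grep -qx "$name" || echo "$name"
  done
}

# Typical use once the cluster has been started:
#   jps | missing_daemons
```

An empty result means all five processes were found.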

Step 6) Stopping Hadoop
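Stopping can be sketched the same way, assuming HADOOP_HOME points at a Hadoop 2.x installation with the usual sbin/ scripts. The function name stop_cluster is chosen for this example; it stops YARN first and HDFS second.

```shell
#!/usr/bin/env bash
# Stop the YARN daemons, then the HDFS daemons.
stop_cluster() {
  "$HADOOP_HOME/sbin/stop-yarn.sh"
  "$HADOOP_HOME/sbin/stop-dfs.sh"
}
```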
